Quality-Diversity through AI Feedback

ICLR 2024 (Thu 9 May 10:45 CEST) [Halle B #76]

Abstract

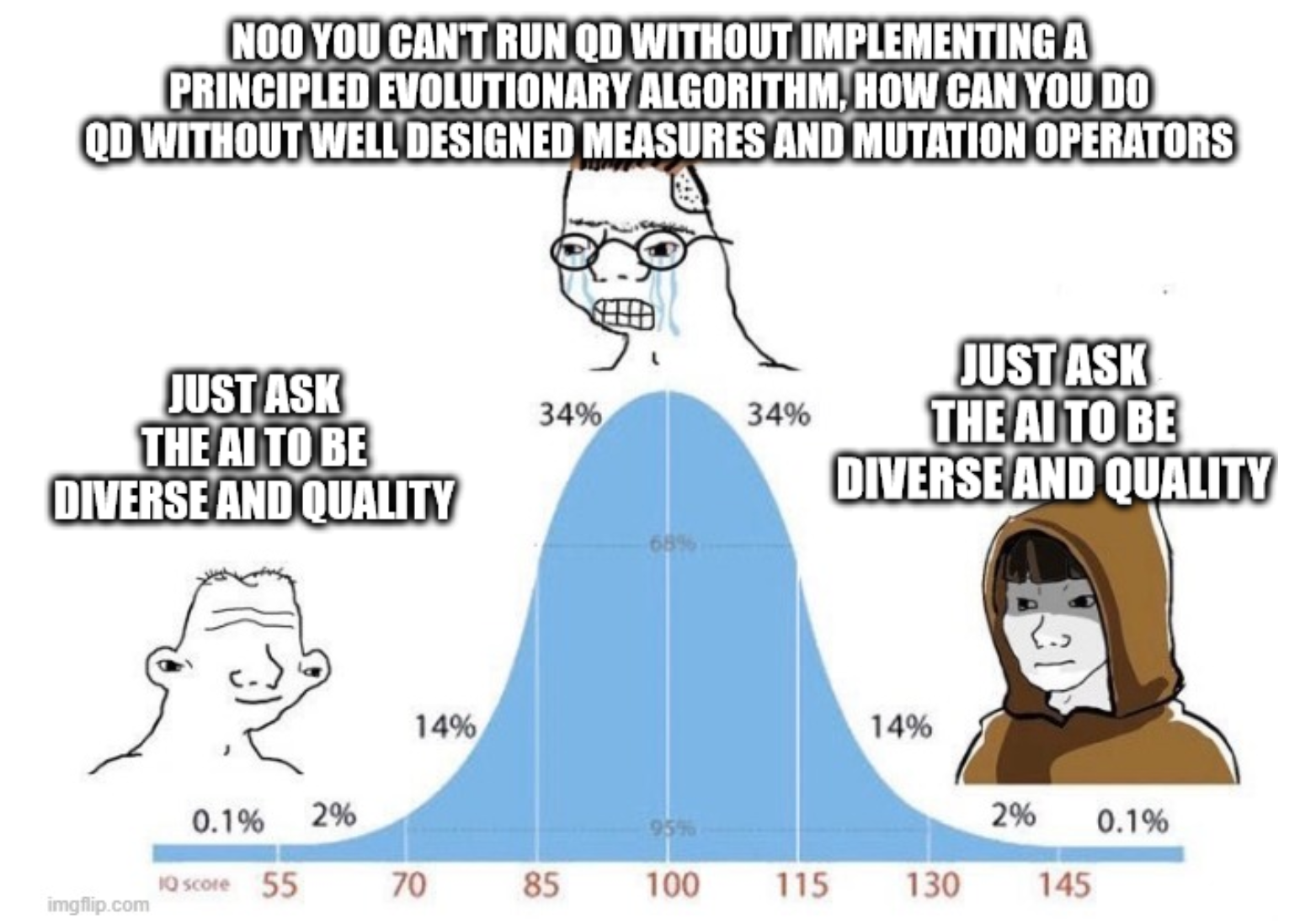

In many text-generation problems, users may prefer not only a single response, but a diverse range of high-quality outputs from which to choose. Quality-diversity (QD) search algorithms aim at such outcomes, by continually improving and diversifying a population of candidates. However, the applicability of QD to qualitative domains, like creative writing, has been limited by the difficulty of algorithmically specifying measures of quality and diversity. Interestingly, recent developments in language models (LMs) have enabled guiding search through AI feedback, wherein LMs are prompted in natural language to evaluate qualitative aspects of text. Leveraging this development, we introduce Quality-Diversity through AI Feedback (QDAIF), wherein an evolutionary algorithm applies LMs to both generate variation and evaluate the quality and diversity of candidate text. When assessed on creative writing domains, QDAIF covers more of a specified search space with high-quality samples than do non-QD controls. Further, human evaluation of QDAIF-generated creative texts validates reasonable agreement between AI and human evaluation. Our results thus highlight the potential of AI feedback to guide open-ended search for creative and original solutions, providing a recipe that seemingly generalizes to many domains and modalities. In this way, QDAIF is a step towards AI systems that can independently search, diversify, evaluate, and improve, which are among the core skills underlying human society's capacity for innovation.

Method
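
As a rough illustration of the loop described in the abstract (an LM generates variations, and AI feedback scores quality and diversity), here is a minimal sketch. It assumes a MAP-Elites-style archive with a single diversity axis split into 10 bins, which this page does not specify. The functions `mutate_with_lm`, `quality_feedback`, and `diversity_feedback` are hypothetical stubs standing in for LM calls; they use toy heuristics so the example runs on its own.

```python
import random

NUM_BINS = 10  # assumption: one diversity axis, split into 10 equal bins


def mutate_with_lm(parent: str, rng: random.Random) -> str:
    """Hypothetical stub for an LM call that writes a variation of `parent`."""
    return parent + " " + rng.choice(["good", "bad", "great", "awful", "fine"])


def quality_feedback(text: str) -> float:
    """Hypothetical stub for LM-judged quality in [0, 1] (toy: longer is better)."""
    return min(len(text.split()) / 20.0, 1.0)


def diversity_feedback(text: str) -> float:
    """Hypothetical stub for an LM-judged measure in [0, 1], e.g. sentiment."""
    tokens = text.split()
    positive = sum(t in {"good", "great", "fine"} for t in tokens)
    negative = sum(t in {"bad", "awful"} for t in tokens)
    total = positive + negative
    return 0.5 if total == 0 else positive / total


def bin_index(measure: float, num_bins: int = NUM_BINS) -> int:
    """Map a measure in [0, 1] to a bin, clamping 1.0 into the last bin."""
    return min(int(measure * num_bins), num_bins - 1)


def run_qd_search(seed_texts, iterations=500, seed=0):
    """Keep the best-quality text per diversity bin while mutating elites."""
    rng = random.Random(seed)
    archive = {}  # bin index -> (quality, text)

    def try_insert(text):
        quality = quality_feedback(text)
        b = bin_index(diversity_feedback(text))
        # A candidate enters the archive if its bin is empty or it beats the elite.
        if b not in archive or quality > archive[b][0]:
            archive[b] = (quality, text)

    for text in seed_texts:
        try_insert(text)
    for _ in range(iterations):
        # Sample a parent uniformly from the current elites, then vary it.
        _, parent = archive[rng.choice(sorted(archive))]
        try_insert(mutate_with_lm(parent, rng))
    return archive
```

Calling `run_qd_search(["The food was"])` returns a dictionary of elites keyed by bin. In a real system, each stub would be replaced by a prompted LM call.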

Experimental results

QDAIF outperforms baselines in creative writing domains such as opinion-writing (with diverse sentiment), stories (with diverse genres and endings), and poetry (with diverse genres and tones). Performance is measured by QD score, or how well the archive is filled with diverse high-quality solutions. Human evaluation verified that AI feedback is practically aligned with human feedback, and that humans also found sets of elite solutions from QDAIF to be more diverse and higher-quality than those from baselines.
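
To make the QD score concrete, the sketch below assumes the definition common in the quality-diversity literature: the sum of the best quality value in each filled bin of the archive. The archive layout (a dictionary mapping a bin index to a `(quality, text)` pair) and the helper `coverage` are illustrative assumptions, not taken from this page.

```python
def qd_score(archive: dict) -> float:
    """Sum of elite quality over filled bins (assumed definition of QD score)."""
    return sum(quality for quality, _ in archive.values())


def coverage(archive: dict, num_bins: int) -> float:
    """Fraction of bins holding at least one solution (illustrative helper)."""
    return len(archive) / num_bins


# Toy archive: two of ten bins are filled.
example_archive = {
    0: (0.5, "a strongly negative review"),
    9: (0.9, "a strongly positive review"),
}
score = qd_score(example_archive)
filled = coverage(example_archive, num_bins=10)
```

Under this definition, the score rises either when a new bin is filled or when a better solution replaces an existing elite, so it rewards both diversity and quality.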

Animated: QDAIF illuminates the archive more quickly than the baseline, while the baseline converges and misses certain parts of the search space. After displaying a snapshot of example stories, QDAIF continues to fill more bins with solutions.

Conclusion

In conclusion, we show that QDAIF is a promising approach to open-ended search that can reveal unexplored creative writing spaces, surpassing alternative text generation methods in generating diverse, high-quality natural language text. AI feedback, Evolution through Large Models (ELM), and quality-diversity (QD) search were found to be essential ingredients for enhanced AI systems that can innovate in subjective spaces, and the approach seemingly generalizes to multimodal AI. We see many possibilities for building on QDAIF to create search systems with evaluation, diversification, and improvement capabilities, bringing us closer to AI that can support and extend human innovation.

Citation

@article{bradley2023quality,
  title={Quality-Diversity through AI Feedback},
  author={Herbie Bradley and Andrew Dai and Hannah Teufel and Jenny Zhang and Koen Oostermeijer and Marco Bellagente and Jeff Clune and Kenneth Stanley and Grégory Schott and Joel Lehman},
  year={2023},
  journal={arXiv preprint arXiv:2310.13032},
}

Acknowledgements

We thank Robert Baldock, Samuel Weinbach, Souradeep Nanda, Jan Zierstek, and Andres Felipe Cruz Salinas for insightful discussions and feedback within the lab at Aleph Alpha. We also thank Katherine Hardgrave, David Nugent, Daniel Flood, and Formula Trinity Autonomous for the inspiration that seeded the momentum leading up to this work.

The website template was borrowed from Jon Barron, and this page was inspired by the OMNI page.